Data analysis programs hive doot

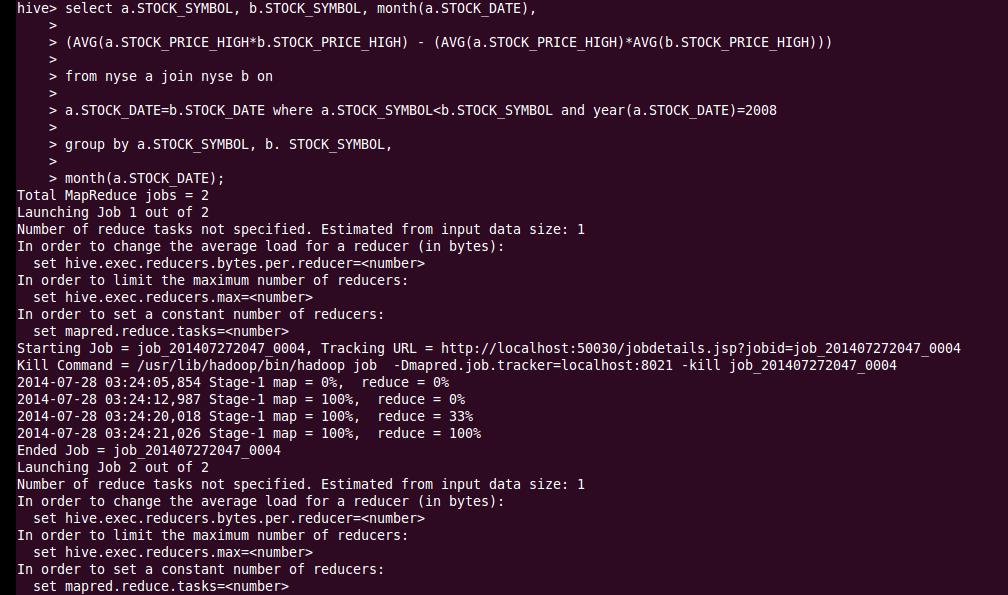

Next step is to load the data in the hive table that we created. Once the table structure is created we can easily view it using Hive describe clause. CREATE EXTERNAL TABLE if not exists airlines ( Year int, Month int, DayofMonth int, DayOfWeek int, DepTime int, CRSDepTime int, ArrTime int, CRSArrTime int, UniqueCarrier string, FlightNum int, TailNum string, ActualElapsedTime int, CRSElapsedTime int, AirTime int, ArrDelay int, DepDelay int, Origin string, Dest string, Distance int, TaxiIn int, TaxiOut int, Cancelled int, CancellationCode string, Diverted string, CarrierDelay int, WeatherDelay int, NASDelay int, SecurityDelay int, LateAircraftDelay int ) row format delimited fields terminated by ',' lines terminated by ' \n ' stored as TEXTFILE : Here we’re creating an external hive table, so that the same dataset can be used for other operations(pig, Hbase) as well. Schema-on-Read vs Schema-on-Write from Amr Awadallah Create External Table This allows you to innovate quickly by asking different and more powerful questions after storing the data. “ Schema-on-read” means you do not need to know how you will use your data when you are storing it. Note that at this point, only the logical structure is defined, data is still not loaded in the table. In the background we are simply creating a logical table structure over the HDFS dataset using Hive. Now we will create a schema for the dataset and then load all the. $~ hadoop fs -put /local_path/file_pattern * /path/to/your/directoryĪnother easier way is upload it using HUE web GUI(from host OS). Now tranfer the data files from guest OS to HDFS using command line and hadoop fs commands. To transer data from host to guest OS, we can simply create a shared folder in host OS and access it from guest OS. In order to load the data in HDFS we have to move the data from host OS to guest OS first and then from guest OS’s file-system to HDFS. Currently the data is in the host OS’s file system. Once we get the data, our next task is to load it in HDFS for further analysis.

Data analysis programs hive doot code#

Variable descriptions Name : Description 1 Year : 1987 - 2008 2 Month : 1 - 12 3 DayofMonth : 1 - 31 4 DayOfWeek : 1 ( Monday ) - 7 ( Sunday ) 5 DepTime : actual departure time ( local, hhmm ) 6 CRSDepTime : scheduled departure time ( local, hhmm ) 7 ArrTime : actual arrival time ( local, hhmm ) 8 CRSArrTime : scheduled arrival time ( local, hhmm ) 9 UniqueCarrier : unique carrier code 10 FlightNum : flight number 11 TailNum plane : tail number 12 ActualElapsedTime : in minutes 13 CRSElapsedTime : in minutes 14 AirTime : in minutes 15 ArrDelay : arrival delay, in minutes 16 DepDelay : departure delay, in minutes 17 Origin : origin IATA airport code 18 Dest : destination IATA airport code 19 Distance : in miles 20 TaxiIn : taxi in time, in minutes 21 TaxiOut : taxi out time in minutes 22 Cancelled : was the flight cancelled ? 0 or 1 23 CancellationCode : reason for cancellation ( A = carrier, B = weather, C = NAS, D = security ) 24 Diverted : 1 = yes, 0 = no 25 CarrierDelay : in minutes 26 WeatherDelay : in minutes 27 NASDelay : in minutes 28 SecurityDelay : in minutes 29 LateAircraftDelay : in minutes

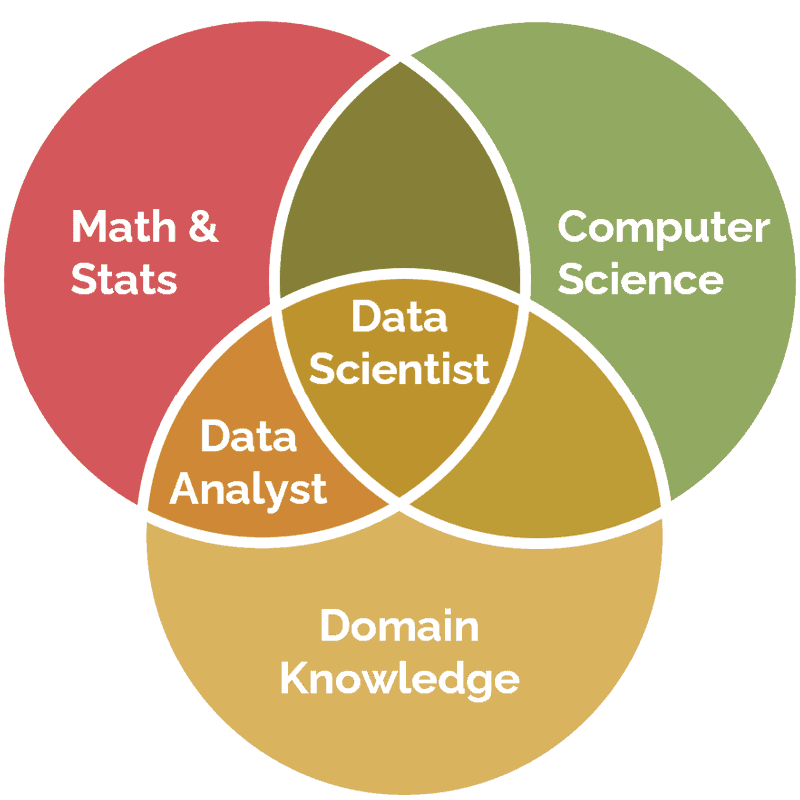

It’s a huge dataset(2 decades old) which contains around 120 million rows of flight details and sums up to about 12GB when uncompressed.įollowing is the detailed description of all the variables used in the dataset. Can you detect cascading failures as delays in one airport create delays in others? Are there critical links in the system?.How well does weather predict plane delays?.How does the number of people flying between different locations change over time?.When is the best time of day/day of week/time of year to fly to minimise delays?.This dataset can be used to work on cool travel ideas like: The Airline On-Time Performance Data, “contains on-time arrival data for non-stop domestic flights by major air carriers, and provides such additional items as departure and arrival delays, origin and destination airports, flight numbers, scheduled and actual departure and arrival times, cancelled or diverted flights, taxi-out and taxi-in times, air time, and non-stop distance.” Tools required: Any host system(I’m using windows7), cloudera’s CDH 5.4.0 quickstart virtual instance Introduction Knowledge required: SQL and basic hadoop(hdfs, hive) concepts This post is about analysing 20 million rows of flight on-time/delay performance using hadoop and hive.